eSeGeCe

software

ChatGPT Delphi Client (2 / 5)

The OpenAI API lets you build your own AI chats using ChatGPT Turbo. With the sgcWebSockets library it is very easy to interact with the API: given a chat conversation, the model returns a chat completion response.
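To make "chat conversation" concrete: in the chat format each message is an object with a role ('system', 'user' or 'assistant') and a content, and the whole history is sent with every request, because the API keeps no state between calls. The sketch below builds such a messages array with Delphi's own System.JSON unit instead of the sgcWebSockets classes. TChatTurn, Turn and BuildMessages are hypothetical names used only for illustration, and a recent Delphi version (10.x or later) is assumed.

```delphi
program ChatFormatSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

type
  // Hypothetical record holding one turn of the conversation.
  TChatTurn = record
    Role: string;    // 'system', 'user' or 'assistant'
    Content: string;
  end;

// Hypothetical helper: converts a conversation into the chat-format
// "messages" array: [{"role": ..., "content": ...}, ...]
function BuildMessages(const aTurns: array of TChatTurn): TJSONArray;
var
  i: Integer;
  oMsg: TJSONObject;
begin
  Result := TJSONArray.Create;
  for i := Low(aTurns) to High(aTurns) do
  begin
    oMsg := TJSONObject.Create;
    oMsg.AddPair('role', aTurns[i].Role);
    oMsg.AddPair('content', aTurns[i].Content);
    Result.AddElement(oMsg); // the array takes ownership of oMsg
  end;
end;

// Hypothetical convenience constructor for a TChatTurn.
function Turn(const aRole, aContent: string): TChatTurn;
begin
  Result.Role := aRole;
  Result.Content := aContent;
end;

var
  oMessages: TJSONArray;
begin
  // Earlier turns (including the assistant's previous answer) are resent
  // so the model can use them as context for the last user message.
  oMessages := BuildMessages([
    Turn('system', 'You are a helpful assistant.'),
    Turn('user', 'What is Delphi?'),
    Turn('assistant', 'Delphi is an Object Pascal language and IDE.'),
    Turn('user', 'Which platforms can it target?')]);
  try
    Writeln(oMessages.ToJSON);
  finally
    oMessages.Free; // also frees every message object it owns
  end;
end.
```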

ChatGPT Delphi Example

OpenAI requires you to build a request where you pass the messages to send to ChatGPT Turbo, the temperature (to get a more or less random output) and other optional settings. Find below a list of the available parameters.

- model: (Required) ID of the model to use. See the model endpoint compatibility table for details on which models work with the Chat API.

- messages: (Required) The messages to generate chat completions for, in the chat format.

- temperature: What sampling temperature to use, between 0 and 2. Higher values like 0.8 will make the output more random, while lower values like 0.2 will make it more focused and deterministic.

- top_p: An alternative to sampling with temperature, called nucleus sampling, where the model considers the results of the tokens with top_p probability mass. So 0.1 means only the tokens comprising the top 10% probability mass are considered.

- n: How many chat completion choices to generate for each input message.

- stream: If set, partial message deltas will be sent, like in ChatGPT. Tokens will be sent as data-only server-sent events as they become available, with the stream terminated by a data: [DONE] message. See the OpenAI Cookbook for example code.

- stop: Up to 4 sequences where the API will stop generating further tokens.

- max_tokens: The maximum number of tokens to generate in the chat completion. The total length of input tokens and generated tokens is limited by the model's context length.

- presence_penalty: Number between -2.0 and 2.0. Positive values penalize new tokens based on whether they appear in the text so far, increasing the model's likelihood to talk about new topics.

- frequency_penalty: Number between -2.0 and 2.0. Positive values penalize new tokens based on their existing frequency in the text so far, decreasing the model's likelihood to repeat the same line verbatim.

- logit_bias: Modify the likelihood of specified tokens appearing in the completion. Accepts a JSON object that maps tokens (specified by their token ID in the tokenizer) to an associated bias value from -100 to 100. Mathematically, the bias is added to the logits generated by the model prior to sampling. The exact effect will vary per model, but values between -1 and 1 should decrease or increase the likelihood of selection; values like -100 or 100 should result in a ban or exclusive selection of the relevant token.

- user: A unique identifier representing your end-user, which can help OpenAI to monitor and detect abuse.
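The parameters above end up as fields of the JSON body posted to the chat completions endpoint. The following sketch builds such a body by hand with Delphi's System.JSON unit, purely to show its shape; when using sgcWebSockets, TsgcOpenAIClass_Request_ChatCompletion is presumably serialized into a body like this for you. BuildChatRequest, the stop sequence 'END' and the user id 'user-1234' are hypothetical, and a recent Delphi version (10.x or later) is assumed.

```delphi
program ChatRequestBodySketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

// Hypothetical helper: builds a chat completion request body by hand.
function BuildChatRequest(const aModel, aUserMessage: string;
  aTemperature: Double; aMaxTokens: Integer): TJSONObject;
var
  oMessage: TJSONObject;
  oMessages, oStop: TJSONArray;
begin
  Result := TJSONObject.Create;
  // Required: model and messages (chat format: role + content)
  Result.AddPair('model', aModel);
  oMessage := TJSONObject.Create;
  oMessage.AddPair('role', 'user');
  oMessage.AddPair('content', aUserMessage);
  oMessages := TJSONArray.Create;
  oMessages.AddElement(oMessage);
  Result.AddPair('messages', oMessages); // Result now owns oMessages
  // Optional: sampling and length controls
  Result.AddPair('temperature', TJSONNumber.Create(aTemperature)); // 0 to 2
  Result.AddPair('max_tokens', TJSONNumber.Create(aMaxTokens));
  Result.AddPair('n', TJSONNumber.Create(1)); // one choice per request
  // Optional: up to 4 stop sequences ('END' is a hypothetical example)
  oStop := TJSONArray.Create;
  oStop.AddElement(TJSONString.Create('END'));
  Result.AddPair('stop', oStop);
  // Optional: end-user identifier (hypothetical value)
  Result.AddPair('user', 'user-1234');
end;

var
  oRequest: TJSONObject;
begin
  oRequest := BuildChatRequest('gpt-3.5-turbo', 'Hello!', 0.2, 256);
  try
    Writeln(oRequest.ToJSON);
  finally
    oRequest.Free; // also frees the nested arrays and objects
  end;
end.
```

Only model and messages are required; every other field can be left out, in which case the API applies its own defaults.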

Find below a simple example that sends a message to ChatGPT Turbo.

procedure SendMessageChatGPT(const aMessage: string);
var
  i: Integer;
  oMessages: TsgcOpenAIArray_Request_Completion_Messages;
  oMessage: TsgcOpenAIClass_Request_Completion_Message;
  oRequest: TsgcOpenAIClass_Request_ChatCompletion;
  oResponse: TsgcOpenAIClass_Response_ChatCompletion;
begin
  // OpenAI is the sgcWebSockets OpenAI client component (API key already set)
  // and DoLog is a logging helper; both are declared elsewhere in the demo.
  oRequest := TsgcOpenAIClass_Request_ChatCompletion.Create;
  Try
    // ... model
    oRequest.Model := 'gpt-3.5-turbo';
    // ... create message and store it in the request's messages array
    oMessage := TsgcOpenAIClass_Request_Completion_Message.Create;
    oMessage.Content := aMessage;
    oMessages := oRequest.Messages;
    SetLength(oMessages, 1);
    oMessages[0] := oMessage;
    oRequest.Messages := oMessages;
    // ... send message
    oResponse := OpenAI.CreateChatCompletion(oRequest);
    // ... process response: log the role and content of every returned choice
    for i := 0 to Length(oResponse.Choices) - 1 do
      DoLog('[' + oResponse.Choices[i]._Message.Role + '] ' +
        oResponse.Choices[i]._Message.Content);
  Finally
    oRequest.Free;
  End;
End;
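oResponse.Choices presumably mirrors the "choices" array of the JSON returned by the API, where each choice holds a message with a role and a content. As a library-independent sketch, the program below reads a hand-written sample response (not real API output) with System.JSON and formats each choice the same way the loop in SendMessageChatGPT does. ExtractReplies and SAMPLE_RESPONSE are hypothetical names, and a recent Delphi version (10.x or later) is assumed.

```delphi
program ParseChatResponseSketch;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

const
  // Hand-written sample with the shape of a chat completion response
  // (illustrative only, not real API output).
  SAMPLE_RESPONSE =
    '{"object":"chat.completion","model":"gpt-3.5-turbo",' +
    '"choices":[{"index":0,"message":{"role":"assistant",' +
    '"content":"Hello! How can I help you?"},"finish_reason":"stop"}]}';

// Hypothetical helper: returns one '[role] content' line per choice.
function ExtractReplies(const aJSON: string): TArray<string>;
var
  oRoot: TJSONValue;
  oChoices: TJSONArray;
  oMessage: TJSONObject;
  i: Integer;
begin
  oRoot := TJSONObject.ParseJSONValue(aJSON);
  if oRoot = nil then
    raise Exception.Create('Invalid JSON response');
  try
    oChoices := (oRoot as TJSONObject).GetValue('choices') as TJSONArray;
    SetLength(Result, oChoices.Count);
    for i := 0 to oChoices.Count - 1 do
    begin
      oMessage := (oChoices.Items[i] as TJSONObject).GetValue('message') as TJSONObject;
      Result[i] := '[' + oMessage.GetValue('role').Value + '] ' +
        oMessage.GetValue('content').Value;
    end;
  finally
    oRoot.Free; // frees the whole parsed tree
  end;
end;

var
  sLine: string;
begin
  for sLine in ExtractReplies(SAMPLE_RESPONSE) do
    Writeln(sLine); // prints: [assistant] Hello! How can I help you?
end.
```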

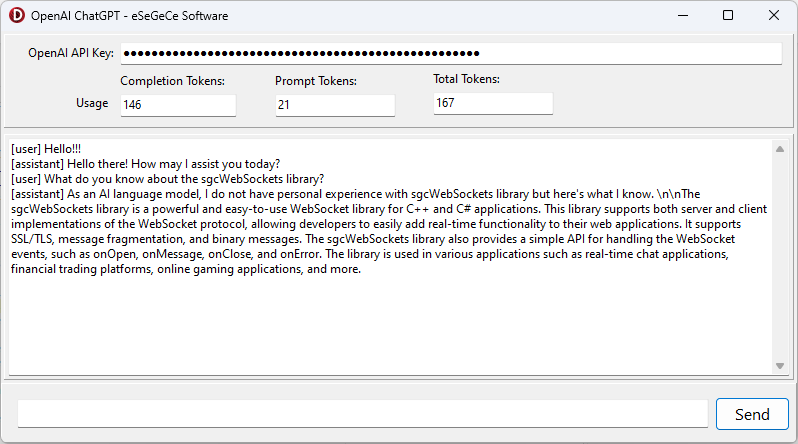

Find below the compiled demo for Windows, built with the sgcWebSockets OpenAI Delphi library.
