eSeGeCe

software

Mistral Delphi API Client

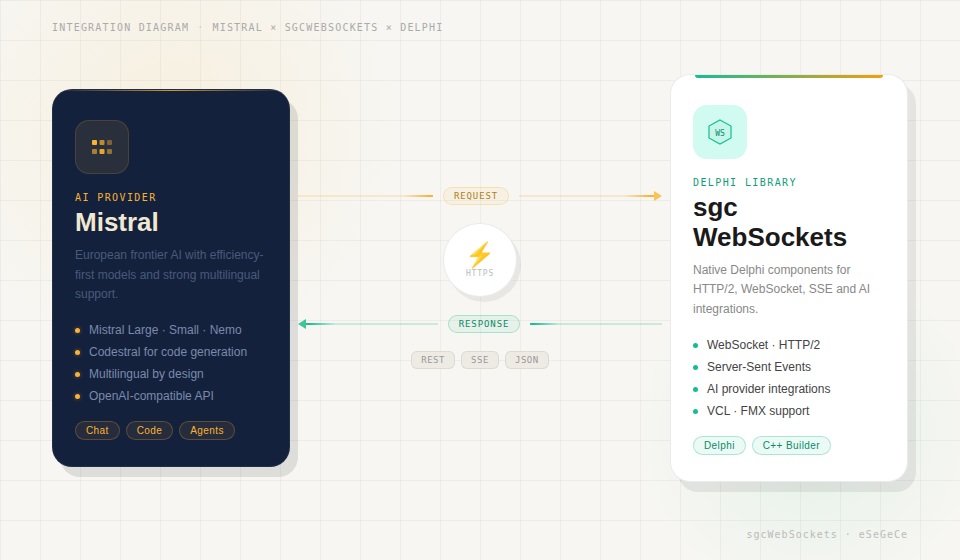

Mistral AI has established itself as a leading European AI provider, delivering high-performance models that excel at multilingual understanding, code generation, function calling, and structured outputs. For Delphi developers looking to integrate Mistral into their applications, sgcWebSockets provides TsgcHTTP_API_Mistral, a native component that wraps the Mistral API in clean, type-safe Delphi code.

Whether you are building intelligent chatbots, generating structured JSON outputs, analyzing images, creating embeddings for semantic search, or orchestrating tool-augmented workflows, this component gives you direct access to the Mistral features covered in this article. No REST boilerplate. No JSON wrangling. Just drop the component, set your API key, and start building.

Complete API Coverage

Every major feature of the Mistral API is supported out of the box.

| Feature | Description |
|---|---|
| Chat Completions | Send messages with system prompts, receive responses synchronously or streamed. Full control over temperature, top-p, and stop sequences. |
| Real-Time Streaming | Stream responses token-by-token using Server-Sent Events. Build responsive UIs that display answers as they are generated. |
| Vision | Analyze images by sending base64-encoded data or image URLs alongside text prompts. Pixtral models describe, interpret, and reason about visual content. |
| JSON Mode | Force Mistral to return valid JSON output. Ideal for data extraction, structured responses, and API integration pipelines. |
| Tool Use: Function Calling | Define custom tools with JSON Schema. Mistral decides when to invoke them, enabling agentic, multi-step workflows. |
| Embeddings | Generate high-quality vector embeddings. Power semantic search, clustering, classification, and recommendation systems. |
| Safe Prompt | Enable Mistral's built-in safety guardrails with a single property. Automatically filter harmful or inappropriate content. |
| Model Management | List all available Mistral models programmatically. Query model IDs, owners, creation dates, and capabilities. |
| Built-in Retry & Logging | Automatic retry on transient failures with configurable attempts and wait intervals. Full request/response logging for debugging. |

Getting Started

Integrate Mistral AI into your Delphi project in under a minute. Drop the component, configure your API key, and send your first message.

    // Create the component and configure the API key
    var
      Mistral: TsgcHTTP_API_Mistral;
      vResponse: string;
    begin
      Mistral := TsgcHTTP_API_Mistral.Create(nil);
      try
        Mistral.MistralOptions.ApiKey := 'YOUR_API_KEY';
        // Send a simple message to Mistral
        vResponse := Mistral._CreateMessage(
          'mistral-large-latest', 'Hello, Mistral!');
        ShowMessage(vResponse);
      finally
        Mistral.Free;
      end;
    end;

Two API styles. Every feature offers both convenience methods (string-based, minimal code) and typed request/response classes (full control, type safety). Choose the approach that best suits your use case.

Chat Completions & Streaming

The Chat Completions API is the foundation of every Mistral interaction. Send text with optional system prompts, and receive responses synchronously or streamed in real-time.

System Prompts

Control Mistral's behavior by providing a system prompt that sets the context, personality, or constraints for the conversation.

    vResponse := Mistral._CreateMessageWithSystem(
      'mistral-large-latest',
      'You are a helpful assistant that responds in Spanish.',
      'What is the capital of France?');
    // Returns: "La capital de Francia es París."

Real-Time Streaming

For responsive user interfaces, stream Mistral's response token-by-token using Server-Sent Events. Assign the OnHTTPAPISSE event handler and call _CreateMessageStream.
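The aData string delivered to the handler in this section is the raw JSON of one stream chunk, so most UIs will want only the text fragment inside it. The following is a minimal sketch using Delphi's System.JSON unit. It assumes the chunk follows Mistral's streaming shape (choices[0].delta.content) and that the stream may end with a literal [DONE] marker; whether the component forwards that marker to the handler is an assumption, and ExtractDeltaContent is a hypothetical helper, not part of sgcWebSockets.

```pascal
program SseDeltaDemo;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

// Hypothetical helper: returns the text fragment carried by one stream
// chunk, or an empty string when the chunk carries none.
function ExtractDeltaContent(const aData: string): string;
var
  oRoot, oDelta: TJSONValue;
  oChoices: TJSONArray;
  vText: string;
begin
  Result := '';
  // Assumed end-of-stream marker; it is not JSON, so skip it.
  if Trim(aData) = '[DONE]' then
    Exit;
  oRoot := TJSONObject.ParseJSONValue(aData);
  if oRoot = nil then
    Exit; // not valid JSON
  try
    // Walk choices[0].delta.content one step at a time.
    if oRoot.TryGetValue<TJSONArray>('choices', oChoices) and
      (oChoices.Count > 0) and
      oChoices.Items[0].TryGetValue<TJSONValue>('delta', oDelta) and
      oDelta.TryGetValue<string>('content', vText) then
      Result := vText;
  finally
    oRoot.Free;
  end;
end;

begin
  Writeln(ExtractDeltaContent(
    '{"choices":[{"index":0,"delta":{"content":"Hello"}}]}'));
  Writeln(ExtractDeltaContent('[DONE]'));
end.
```

Inside the event handler, a call such as Memo1.Lines.Add(aData) could then be swapped for appending ExtractDeltaContent(aData) to the memo text, so the answer grows fragment by fragment instead of showing raw JSON.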

    // Enable streaming via SSE
    Mistral.OnHTTPAPISSE := OnSSEEvent;
    Mistral._CreateMessageStream('mistral-large-latest',
      'Explain the theory of relativity.');

    procedure TForm1.OnSSEEvent(Sender: TObject;
      const aEvent, aData: string; var Cancel: Boolean);
    begin
      // aData: JSON payload with generated content
      Memo1.Lines.Add(aData);
    end;

Advanced Typed API

For full control over request parameters — temperature, top-p, stop sequences, random seed, safe prompt — use the typed request and response classes.

    var
      oRequest: TsgcMistralClass_Request_ChatCompletion;
      oMessage: TsgcMistralClass_Request_Message;
      oResponse: TsgcMistralClass_Response_ChatCompletion;
    begin
      oRequest := TsgcMistralClass_Request_ChatCompletion.Create;
      try
        oRequest.Model := 'mistral-large-latest';
        oRequest.MaxTokens := 2048;
        oRequest.Temperature := 0.7;
        oRequest.TopP := 0.9;
        oRequest.SafePrompt := True;
        oRequest.RandomSeed := 42;

        oMessage := TsgcMistralClass_Request_Message.Create;
        oMessage.Role := 'user';
        oMessage.Content := 'Explain quantum computing in simple terms.';
        oRequest.Messages.Add(oMessage);

        oResponse := Mistral.CreateMessage(oRequest);
        try
          if Length(oResponse.Choices) > 0 then
            ShowMessage(oResponse.Choices[0].Message.Content);
        finally
          oResponse.Free;
        end;
      finally
        oRequest.Free;
      end;
    end;

Vision: Image Understanding

Mistral's Pixtral models can analyze and reason about images. Send photographs, screenshots, diagrams, or documents alongside a text prompt and receive detailed descriptions, data extraction, or visual Q&A.

    // Load an image and ask Mistral to analyze it
    var
      vBase64: string;
    begin
      vBase64 := sgcBase64Encode(LoadFileToBytes('architecture-diagram.png'));
      ShowMessage(Mistral._CreateVisionMessage(
        'pixtral-large-latest',
        'Describe the architecture shown in this diagram.',
        vBase64, 'image/png'));
    end;

Use case. Automate document analysis, extract data from diagrams, classify images, or build visual understanding into your workflows, all from native Delphi code.

JSON Mode

Force Mistral to return valid, parseable JSON output. JSON mode is perfect for data extraction, structured API responses, and automated processing pipelines where you need guaranteed machine-readable output.
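JSON mode returns a string, so the application still has to parse it and cope with missing keys, since the model chooses the key names. A minimal sketch with Delphi's System.JSON unit follows, assuming the response uses the name, age, and city keys requested in this section's sample prompt. TPerson and TryParsePerson are hypothetical names introduced for illustration.

```pascal
program JsonModeParseDemo;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

type
  // Hypothetical target record for the extracted fields.
  TPerson = record
    Name: string;
    Age: Integer;
    City: string;
  end;

// Returns True only if the text is valid JSON and all three keys are present.
function TryParsePerson(const aJSON: string; out aPerson: TPerson): Boolean;
var
  oRoot: TJSONValue;
begin
  Result := False;
  aPerson := Default(TPerson);
  oRoot := TJSONObject.ParseJSONValue(aJSON);
  if oRoot = nil then
    Exit; // not valid JSON
  try
    Result := oRoot.TryGetValue<string>('name', aPerson.Name) and
      oRoot.TryGetValue<Integer>('age', aPerson.Age) and
      oRoot.TryGetValue<string>('city', aPerson.City);
  finally
    oRoot.Free;
  end;
end;

var
  vPerson: TPerson;
begin
  if TryParsePerson('{"name": "John", "age": 30, "city": "Madrid"}', vPerson) then
    Writeln(Format('%s (%d), %s', [vPerson.Name, vPerson.Age, vPerson.City]))
  else
    Writeln('Unexpected JSON shape');
end.
```

Returning a Boolean rather than raising keeps the calling code simple when the model omits a field; a stricter pipeline might log the raw response and retry instead.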

    // Generate structured JSON output
    vResponse := Mistral._CreateMessageJSON(
      'mistral-large-latest',
      'Extract the name, age, and city from: John is 30, lives in Madrid.');
    // Returns: {"name": "John", "age": 30, "city": "Madrid"}

    // Using the typed API for JSON mode
    oRequest.ResponseFormat := 'json_object';

Tool Use: Function Calling

Define custom tools with JSON Schema, and Mistral will decide when and how to invoke them. This is the foundation for building agentic, multi-step workflows that connect the AI to your business logic.
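When Mistral asks for a tool, the application receives a function name and a JSON string of arguments, and it is up to your code to run the matching routine. A minimal dispatch sketch follows, assuming the get_weather tool used in this section. ExecuteTool and GetWeather are hypothetical helpers with canned data, and how the result is handed back to the model depends on the component's message classes, which are not shown here.

```pascal
program ToolDispatchDemo;

{$APPTYPE CONSOLE}

uses
  System.SysUtils, System.JSON;

// Stand-in for real business logic; returns a JSON string with fixed data.
function GetWeather(const aLocation: string): string;
var
  oResult: TJSONObject;
begin
  oResult := TJSONObject.Create;
  try
    oResult.AddPair('location', aLocation);
    oResult.AddPair('temperature_c', TJSONNumber.Create(21));
    Result := oResult.ToJSON;
  finally
    oResult.Free;
  end;
end;

// Hypothetical dispatcher: maps a tool name plus JSON arguments to a result.
function ExecuteTool(const aName, aArguments: string): string;
var
  oArgs: TJSONValue;
  vLocation: string;
begin
  if not SameText(aName, 'get_weather') then
    Exit('{"error":"unknown tool"}');
  oArgs := TJSONObject.ParseJSONValue(aArguments);
  if oArgs = nil then
    Exit('{"error":"invalid arguments"}');
  try
    if oArgs.TryGetValue<string>('location', vLocation) then
      Result := GetWeather(vLocation)
    else
      Result := '{"error":"missing location"}';
  finally
    oArgs.Free;
  end;
end;

begin
  Writeln(ExecuteTool('get_weather', '{"location":"Madrid, Spain"}'));
  Writeln(ExecuteTool('unknown_tool', '{}'));
end.
```

Returning errors as JSON strings rather than raising exceptions lets the model see what went wrong and, in a multi-step workflow, try again with corrected arguments.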

    // Define a tool with JSON Schema
    oTool := TsgcMistralClass_Request_Tool.Create;
    oTool.FunctionName := 'get_weather';
    oTool.FunctionDescription := 'Get the current weather in a location';
    oTool.FunctionParameters :=
      '{"type":"object","properties":{"location":{"type":"string",' +
      '"description":"City and country"}},"required":["location"]}';
    oRequest.Tools.Add(oTool);
    oRequest.ToolChoice := 'auto';

    oResponse := Mistral.CreateMessage(oRequest);

    // Check if Mistral wants to call a tool (guard against an empty Choices array)
    if (Length(oResponse.Choices) > 0) and
      (oResponse.Choices[0].FinishReason = 'tool_calls') then
    begin
      for i := 0 to Length(oResponse.Choices[0].Message.ToolCalls) - 1 do
      begin
        vToolId := oResponse.Choices[0].Message.ToolCalls[i].Id;
        vFuncName := oResponse.Choices[0].Message.ToolCalls[i].FunctionCall.Name;
        vFuncArgs := oResponse.Choices[0].Message.ToolCalls[i].FunctionCall.Arguments;
        // Execute the tool and return the result
      end;
    end;

Embeddings

Generate high-quality vector embeddings for text using Mistral's embedding models. Embeddings power semantic search, document clustering, recommendation engines, and classification tasks.
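Semantic search over embeddings usually comes down to comparing two vectors, most often with cosine similarity. The sketch below shows that comparison in plain Delphi. It takes ordinary arrays of Double because the way the typed response exposes the float values is not shown in this article, and CosineSimilarity is a hypothetical helper, not part of the component.

```pascal
program CosineDemo;

{$APPTYPE CONSOLE}

uses
  System.SysUtils;

// Cosine similarity: dot(A, B) / (|A| * |B|).
// Returns 0 when either vector has zero length.
function CosineSimilarity(const A, B: array of Double): Double;
var
  i: Integer;
  vDot, vNormA, vNormB: Double;
begin
  if Length(A) <> Length(B) then
    raise EArgumentException.Create('Vectors must have the same length');
  vDot := 0;
  vNormA := 0;
  vNormB := 0;
  for i := 0 to High(A) do
  begin
    vDot := vDot + A[i] * B[i];
    vNormA := vNormA + A[i] * A[i];
    vNormB := vNormB + B[i] * B[i];
  end;
  if (vNormA = 0) or (vNormB = 0) then
    Exit(0);
  Result := vDot / (Sqrt(vNormA) * Sqrt(vNormB));
end;

begin
  Writeln(CosineSimilarity([1.0, 0.0], [1.0, 0.0]):0:3); // same direction: 1.000
  Writeln(CosineSimilarity([1.0, 0.0], [0.0, 1.0]):0:3); // orthogonal: 0.000
end.
```

To rank documents against a query, embed the query once, compute this score against each stored document vector, and sort in descending order.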

    // Generate embeddings for a text
    var
      vEmbedding: string;
    begin
      vEmbedding := Mistral._CreateEmbeddings(
        'mistral-embed',
        'Delphi is a powerful programming language.');
      ShowMessage(vEmbedding);
    end;

For full control, use the typed API to batch multiple inputs and select the encoding format.

    var
      oRequest: TsgcMistralClass_Request_Embeddings;
      oResponse: TsgcMistralClass_Response_Embeddings;
    begin
      oRequest := TsgcMistralClass_Request_Embeddings.Create;
      try
        oRequest.Model := 'mistral-embed';
        oRequest.Input.Add('First document to embed');
        oRequest.Input.Add('Second document to embed');
        oRequest.EncodingFormat := 'float';

        oResponse := Mistral.CreateEmbeddings(oRequest);
        try
          ShowMessage('Embeddings: ' + IntToStr(Length(oResponse.Data)));
          ShowMessage('Tokens used: ' + IntToStr(oResponse.Usage.TotalTokens));
        finally
          oResponse.Free;
        end;
      finally
        oRequest.Free;
      end;
    end;

Safe Prompt

Mistral offers a built-in safety layer that can be enabled with a single property. When SafePrompt is enabled, a safety-focused system prompt is automatically prepended to filter harmful or inappropriate content.

    // Enable safety guardrails
    oRequest.SafePrompt := True;

Reproducible results. Set RandomSeed to a fixed value to make outputs repeatable. Combined with Temperature := 0, this keeps responses for the same input as consistent as the model allows, which is useful for testing and validation pipelines.

Model Management

Query available Mistral models programmatically. List all models to discover new capabilities as they become available.

    // List all available Mistral models
    vModels := Mistral._GetModels;

    // Typed API: access model properties directly
    var
      oModels: TsgcMistralClass_Response_Models;
      i: Integer;
    begin
      oModels := Mistral.GetModels;
      try
        for i := 0 to Length(oModels.Data) - 1 do
          Memo1.Lines.Add(oModels.Data[i].Id + ' (by ' +
            oModels.Data[i].OwnedBy + ')');
      finally
        oModels.Free;
      end;
    end;

Configuration & Options

Fine-tune the component behavior with comprehensive configuration options.

| Property | Description |
|---|---|
| MistralOptions.ApiKey | Your Mistral API key (required) |
| HttpOptions.ReadTimeout | HTTP read timeout in milliseconds (default: 60000) |
| LogOptions.Enabled | Enable request/response logging |
| RetryOptions.Enabled | Automatic retry on transient failures |
| RetryOptions.Retries | Maximum number of retry attempts (default: 3) |
| RetryOptions.Wait | Wait time between retries in milliseconds (default: 3000) |
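As a quick illustration, the options in the table could be set in code along the following lines. This is a fragment rather than a complete program: the property names come from the table, the values are illustrative only, Mistral is an already created TsgcHTTP_API_Mistral instance, and the MISTRAL_API_KEY environment variable name is an assumption.

```pascal
// Fragment: assumes System.SysUtils is in the uses clause and that
// Mistral is an existing TsgcHTTP_API_Mistral instance.

// Read the key from the environment instead of hard-coding it
// (the variable name MISTRAL_API_KEY is an assumption).
Mistral.MistralOptions.ApiKey := GetEnvironmentVariable('MISTRAL_API_KEY');

// Allow long generations up to two minutes before timing out.
Mistral.HttpOptions.ReadTimeout := 120000;

// Log requests and responses while debugging.
Mistral.LogOptions.Enabled := True;

// Retry transient failures up to 5 times, waiting 2 seconds between attempts.
Mistral.RetryOptions.Enabled := True;
Mistral.RetryOptions.Retries := 5;
Mistral.RetryOptions.Wait := 2000;
```

Keeping the key out of source code avoids committing it to version control by accident.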

Request Parameters

| Parameter | Description |
|---|---|
| Temperature | Sampling temperature (0.0–2.0). Lower values = more deterministic. |
| TopP | Nucleus sampling (0.0–1.0). Controls cumulative probability cutoff. |
| MaxTokens | Maximum number of tokens in the response (default: 4096). |
| SafePrompt | Enable built-in safety guardrails for content filtering. |
| RandomSeed | Fixed seed for reproducible outputs. Ideal for testing. |
| ResponseFormat | Set to 'json_object' for guaranteed JSON output. |
| ToolChoice | Control tool selection: 'auto', 'none', or 'required'. |

European AI advantage. Mistral is headquartered in France and offers EU-hosted inference, making it a strong choice for organizations with European data residency requirements. Combined with the sgcWebSockets component's built-in retry logic, logging, and type-safe API, this gives you a solid base for production AI integration; regulatory compliance still depends on how your own application handles data.

Delphi Mistral Demo
